The State of AI in Software Engineering: A CTO Briefing

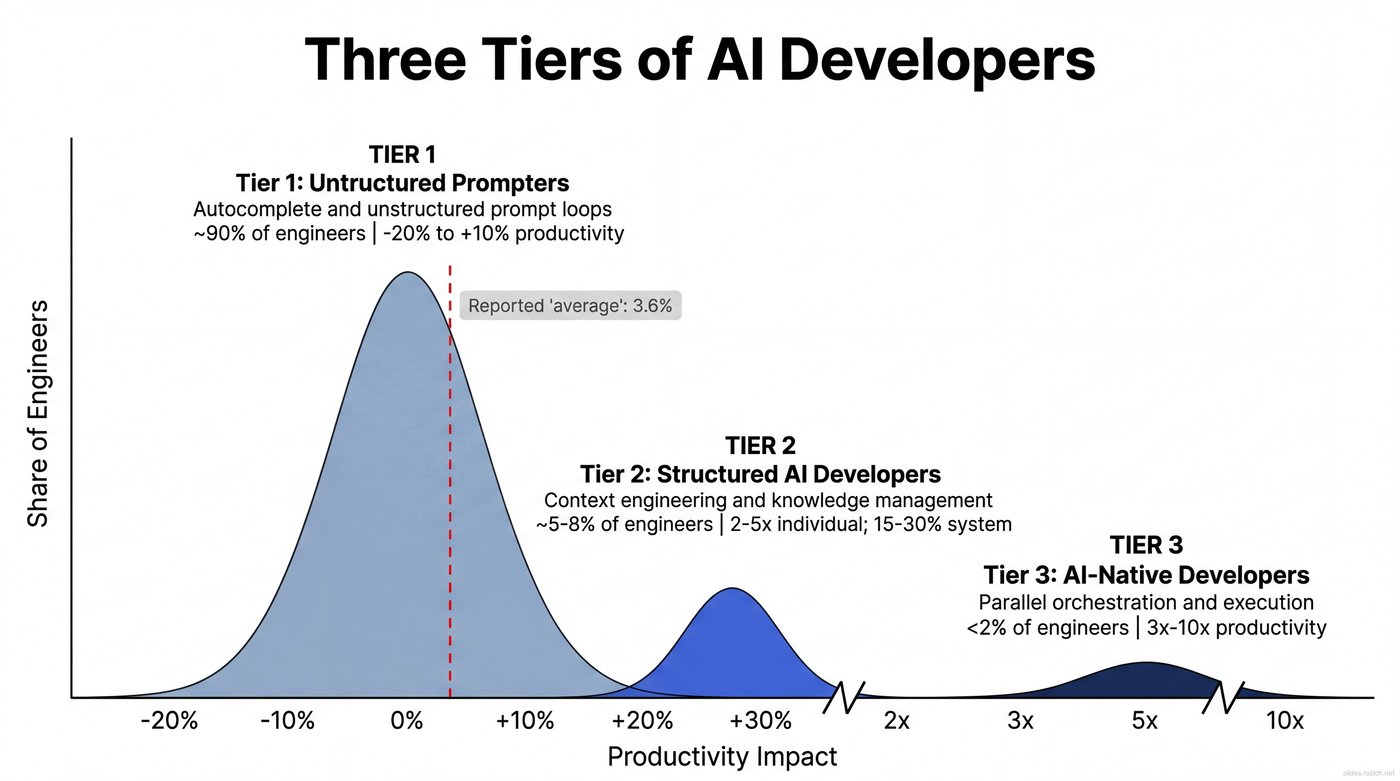

The pressure is coming from everywhere. Your board wants to know why engineering hasn't 10x'd yet. Your CEO saw a demo where two people built a product in a weekend. You're trying to plan next year's roadmap and wondering how much velocity to actually factor in. Meanwhile, small teams of two or three people are shipping products that used to take 50-person orgs months to build. A quarter of the startups in Y Combinator's latest batch have codebases that are 95%+ AI-generated.1 At the same time, the most rigorous study found experienced developers 19% slower with AI tools.2 A 30-million-commit analysis found an average productivity gain of just 3.6%.3 A controlled Stanford study measured net gains of 15-20%.4

There is no single number. The research contradicts itself because the average describes almost nobody — the gains cluster at the extremes.5 What actually determines the outcome isn't the tool. It's how your engineers work with it, how your teams and processes adapt around it, and whether you're willing to treat this as an organizational transformation rather than a technology deployment.

This briefing covers three things:

- Where your engineers actually are — a practical framework for three tiers of AI adoption, what separates each level, and why 90% of your org is stuck at the bottom.

- Why the quality concerns are real but solvable — the data behind the slop, and why it's a workflow problem, not a tool problem.

- What the transformation looks like — why current approaches aren't working, what the path forward actually requires, and how to get started.

Three Classes of Engineers

Tier 1: Unstructured Prompters

You probably have a few engineers who've built impressive little utilities with AI — internal tools, pain-point fixers, side-quest projects that made the team's life easier. You held them up as examples of what's possible. But when those same engineers try to plug AI into their day-to-day work on large, established codebases, the magic disappears. They're still working the way they always have — tab-completion, ad-hoc prompting, accepting suggestions without reviewing every line. Trust goes up, scrutiny goes down.5

And this is where the frustration sets in. They find themselves repeating context mid-session about features they already discussed three prompts ago — and it's maddening. This is where your senior engineers conclude that AI is great for greenfield toys but doesn't work for real codebases. The most rigorous study found experienced developers at this level 19% slower with AI while feeling 20% faster2 — a 39-point perception gap. This is what adoption without discipline looks like. Your adoption dashboards report high usage numbers that mean almost nothing. Roughly 90% of engineers are here, producing somewhere between 20% less and 10% more than they would without AI.

Tier 2: Structured AI Engineers

The approach flips. The agent session becomes the work surface — not the IDE. These engineers write plans before code. They curate context deliberately, giving the agent what it needs to succeed instead of cleaning up after it fails.5 They've invested in context engineering: building systems that give AI the domain knowledge, codebase context, and constraints it needs to produce production-quality output.6

The engineers who make it here have recognized that the pain they felt in Tier 1 wasn't an AI limitation — it was a workflow problem. The mental model shifts from "I write code and AI helps" to "I design systems and AI executes."7 Maybe 5-8% of engineers have made this transition — producing 2-5x the raw output, with net productivity gains of roughly 1.3-1.5x after rework. The companion brief, Beyond Prompt and Pray: A Field Guide to Structured AI Engineering, covers the workflow mechanics in detail.

Tier 3: AI-Native Developers

A Tier 2 engineer gets bored waiting for one agent to finish, so they fire up another. Then another. They find themselves multiplexing between them — and they can't stop. They quickly reach an equilibrium where every agent is waiting for them because somebody's always finished.5 Now delegation is a core skill, and without structured workflows and good context engineering, the whole thing falls apart.

The ones who push through manage teams of AI agents the way a senior engineer manages a team of developers, with multiple agent sessions working simultaneously on different parts of a problem. They operate as full-stack builders: product, design, engineering, and QA in a single role. Fewer than 2% of engineers are here, but they're producing 3-10x what they did before.

The critical transition is from Tier 1 to Tier 2 — where the workflow fundamentally changes. Everything at Tier 1 is the old model with AI bolted on. Everything above it is a new operating model.
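The tier figures above also explain why org-wide averages look so modest. The following is a rough sketch, not a measurement: it weights each tier's output range by its share of engineers. The 90/8/2 split is an assumption picked from the ranges quoted above ("roughly 90%", "5-8%", "less than 2%"), and Tier 2 uses the net 1.3-1.5x figure rather than raw output.

```python
# Tier shares and output multipliers as quoted in this briefing.
# The exact 90/8/2 split is an assumption within the quoted ranges.
TIERS = {
    "tier1": {"share": 0.90, "low": 0.8, "high": 1.1},   # 20% less to 10% more
    "tier2": {"share": 0.08, "low": 1.3, "high": 1.5},   # net gains after rework
    "tier3": {"share": 0.02, "low": 3.0, "high": 10.0},  # 3-10x
}


def blended_multiplier(tiers, bound):
    """Headcount-weighted average output multiplier for one bound ('low' or 'high')."""
    return sum(t["share"] * t[bound] for t in tiers.values())


low = blended_multiplier(TIERS, "low")
high = blended_multiplier(TIERS, "high")
print(f"Org-wide average multiplier: {low:.2f}x to {high:.2f}x")
```

Under these assumptions the blended figure lands between roughly 0.88x and 1.31x, even though a small share of engineers is producing several times their previous output. The average is dominated by the 90% in Tier 1.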

The Quality Question

The difference between slop and production-quality AI code is the workflow around it. Tier 2 engineers build quality in through process — not through hope or heroic code review. Context engineering gives agents the architectural constraints, coding standards, and domain knowledge they need before they write a single line. Automated test generation — something AI excels at — means every feature ships with regression coverage that most human-developed codebases never had in the first place. CI/CD catches what slips through.

The risks are real when this process is missing. AI-generated code introduces 15-18% more security vulnerabilities without proper guardrails.8 Engineers who delegate without engaging conceptually show a 17% decrease in coding mastery.9 Amazon's AI coding agent followed an outdated internal wiki and caused four Sev1 incidents in a single week.10 These are all Tier 1 failures — tool adoption without workflow change.

With the right process, AI-assisted code can be as reliable as, or more reliable than, what your teams produce today.11 Your teams already ship bugs without AI. The question isn't whether AI introduces risk — it's whether the process around it is better than what you have now.

The Transformation

The tiers describe where people are. Moving them up requires change management across three dimensions: people, process, and technology.

People

The paradox: senior engineers get dramatically more out of AI, with nearly 5x the productivity gains of juniors.8 Fifteen to thirty years of architectural judgment, domain knowledge, and quality instincts is exactly what enables sophisticated context engineering. The senior engineer who understands why a code path is dangerous is the one who can give AI the context to avoid it.

And yet these same engineers are the most likely to resist. Many spent years mastering specialized skills that became core to their identity — accepting AI means letting go of the identity wrapped up in that expertise.5 Many tried AI tools when the models were genuinely limited, had a mediocre experience, and concluded "this is where it tops out." Because these are your most respected people, their opinion carries enormous weight. When a staff engineer says "I tried it, it made me slower," that lands harder than any executive memo.

Here's the thing: the gap between major model releases has gone from about four months to two months.5 The tools that frustrated them a year ago bear almost no resemblance to what's shipping now. They're not wrong about what they experienced. They're wrong about extrapolating from it.

The productivity reality: most companies are already over-subscribed against their desired roadmap. AI-augmented engineers don't just work faster — they unlock capacity that didn't exist before. The real opportunity isn't doing the same with less — it's doing dramatically more. Ship the backlog that's been sitting for two years. Launch the products you haven't had capacity for. The organizations that win will use the velocity to take market share, not just cut costs.

Process

Why Current Approaches Aren't Working

Most organizations are trying to solve this with AI literacy courses and tool rollouts. Get everyone a Copilot license, schedule a lunch-and-learn, maybe bring in a vendor for a half-day workshop. Then wonder why nothing changes.

These approaches fail because they treat AI adoption as a technology problem — learn the tool, get the gains. But the transition from Tier 1 to Tier 2 isn't about knowing which buttons to press. It's a fundamental change in how an engineer approaches their work: how they decompose problems, how they manage context, how they think about the relationship between planning and execution. That's a workflow and mindset shift, not a skills gap that closes with training. It takes sustained practice with real projects and, for most engineers, coaching from someone who's already made the transition.

The other common approach — mandating AI usage from the top — creates a different problem. Engineers comply by running prompts through Copilot and accepting whatever comes back. Adoption dashboards go up. Quality goes sideways or down. The engineers who were skeptical feel vindicated. The mandate becomes evidence that "we tried AI and it didn't work."

The Factory Floor Problem

Rolling out AI tool licenses without changing the rest of the workflow is like the early electrification of factories: owners bolted electric motors onto the same belt-and-shaft layouts they'd used with steam engines and saw almost no productivity gains. It took a generation before manufacturers realized the whole factory floor had to be redesigned around the new technology.12 The same pattern shows up in today's delivery metrics: PRs merged per developer are up 98%, but review time is up 91% and bugs per developer are up 9%.13 Code is being generated faster than the rest of the pipeline can absorb it.

The organizations seeing the biggest gains are automating the entire SDLC: not just code generation, but requirements analysis, test generation, code review, and deployment automation.14,15 Code generation is no longer the bottleneck. Everything around it is.
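The bottleneck argument is Amdahl's Law applied to software delivery. As a minimal sketch, the function below uses the illustrative inputs from this briefing's notes (code generation at 30% of time-to-ship, made 10x faster). The function and variable names are illustrative, not taken from any cited source.

```python
def overall_speedup(fraction_accelerated, local_speedup):
    """Amdahl's Law: end-to-end speedup when only part of the work gets faster."""
    remaining = (1.0 - fraction_accelerated) + fraction_accelerated / local_speedup
    return 1.0 / remaining


# Code generation is 30% of time-to-ship and becomes 10x faster.
speedup = overall_speedup(fraction_accelerated=0.30, local_speedup=10.0)
time_saved = 1.0 - 1.0 / speedup

# Upper bound: code generation becomes effectively free.
ceiling = overall_speedup(fraction_accelerated=0.30, local_speedup=float("inf"))

print(f"End-to-end speedup: {speedup:.2f}x")
print(f"Time-to-ship reduction: {time_saved:.0%}")
print(f"Ceiling with instant code generation: {ceiling:.2f}x")
```

With these inputs the end-to-end speedup is about 1.37x, and it cannot exceed about 1.43x however fast code generation becomes. The remaining 70% of the pipeline (review, testing, deployment) sets the limit, which is why the larger gains come from automating those stages too.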

There's a team topology problem too. Put six or seven Tier 2 engineers working at AI speed in the same monolithic repo and you'll drown in merge conflicts and code review overhead. The coordination cost scales with both team size and output velocity. The early evidence points toward AI-augmented pods — small, autonomous teams with agent fleets, each owning a bounded service and shipping independently. This isn't about reducing team size for its own sake — it's about enabling parallelism. Ten pods shipping independently move faster than one large team coordinating across a monolith.

The Cognitive Reality

There's one more thing that makes this hard to scale naively. AI eliminates the routine work — boilerplate, rote fixes, tasks you could do on autopilot — and replaces it with nothing but deep thinking. Architecture calls. Constant evaluation of agent output. Judgment that can't be delegated. Steve Yegge calls this the "AI Vampire" — it drains your cognitive energy even as your output skyrockets.5

An engineer's best hours with structured AI workflows are intensely productive — but they may only sustain three or four of them a day. The routine work that AI replaced wasn't just filler; it was cognitive recovery time. Engineers used to switch between deep problem-solving and lighter tasks naturally. When every hour is redline, burnout happens faster.

This isn't a reason not to adopt. Those three or four peak hours produce more than a full week used to. But it's a reason you can't just tell 20 engineers to "use AI more" and expect them all to sustain it. The intensity is part of why phased adoption matters — and why the engineers who go first need support, not just tools.

Technology

The gap between major model releases has compressed from four months to two months.5 A six-week procurement cycle means your engineers are always an iteration behind. A multi-month one means they're working with tools that bear little resemblance to what's currently shipping.

This is not the same market as your corporate productivity suite. More research, brainpower, and investment dollars are going into AI for software engineering than any other use case right now. The tools your legal team already approved for the accounting department are not the engineering frontier. Your engineers need the ability to try the best available tools and stay current as the landscape shifts — not wait for enterprise procurement to catch up.

Track token burn, not adoption dashboards. Token burn — how many tokens your engineers consume — is the best proxy for whether your team is actually transforming or just checking a box. Jensen Huang told his GTC audience that if an engineer isn't consuming at least $250,000 worth of tokens a year, "I am going to be deeply alarmed."16 Tomasz Tunguz frames inference as the fourth component of engineering compensation — salary, bonus, equity, and tokens — and estimates the 75th percentile engineer may soon carry $100K in annual token costs on top of a $375K package.17 It's not a perfect metric — Goodhart's Law applies18 — but it's the best signal available today for distinguishing surface-level adoption from genuine workflow change. Frame the cost as R&D, not overhead.
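To make the token-burn framing concrete, here is a small sketch that expresses each engineer's token spend as a share of fully loaded cost. The first record uses the Tunguz figures quoted above; the other two records and all names are hypothetical, included only to show how a spread might look.

```python
from dataclasses import dataclass


@dataclass
class EngineerSpend:
    name: str            # hypothetical identifier
    base_package: float  # salary + bonus + equity, USD per year
    token_spend: float   # inference cost, USD per year

    @property
    def fully_loaded(self) -> float:
        return self.base_package + self.token_spend

    @property
    def token_share(self) -> float:
        return self.token_spend / self.fully_loaded


# Figures quoted in the text: $375K package plus $100K in tokens.
example = EngineerSpend("p75-example", 375_000, 100_000)
print(f"${example.fully_loaded:,.0f} fully loaded, {example.token_share:.0%} in tokens")

# Hypothetical team data, for illustration only.
team = [
    EngineerSpend("eng-a", 300_000, 2_400),
    EngineerSpend("eng-b", 300_000, 48_000),
    example,
]
for e in sorted(team, key=lambda e: e.token_share):
    print(f"{e.name}: ${e.token_spend:,.0f}/yr ({e.token_share:.1%} of loaded cost)")
```

A ranking like this is a conversation starter rather than a scorecard. As the Goodhart's Law caveat suggests, it only shows who is experimenting heavily, not who is shipping better outcomes.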

The Path Forward

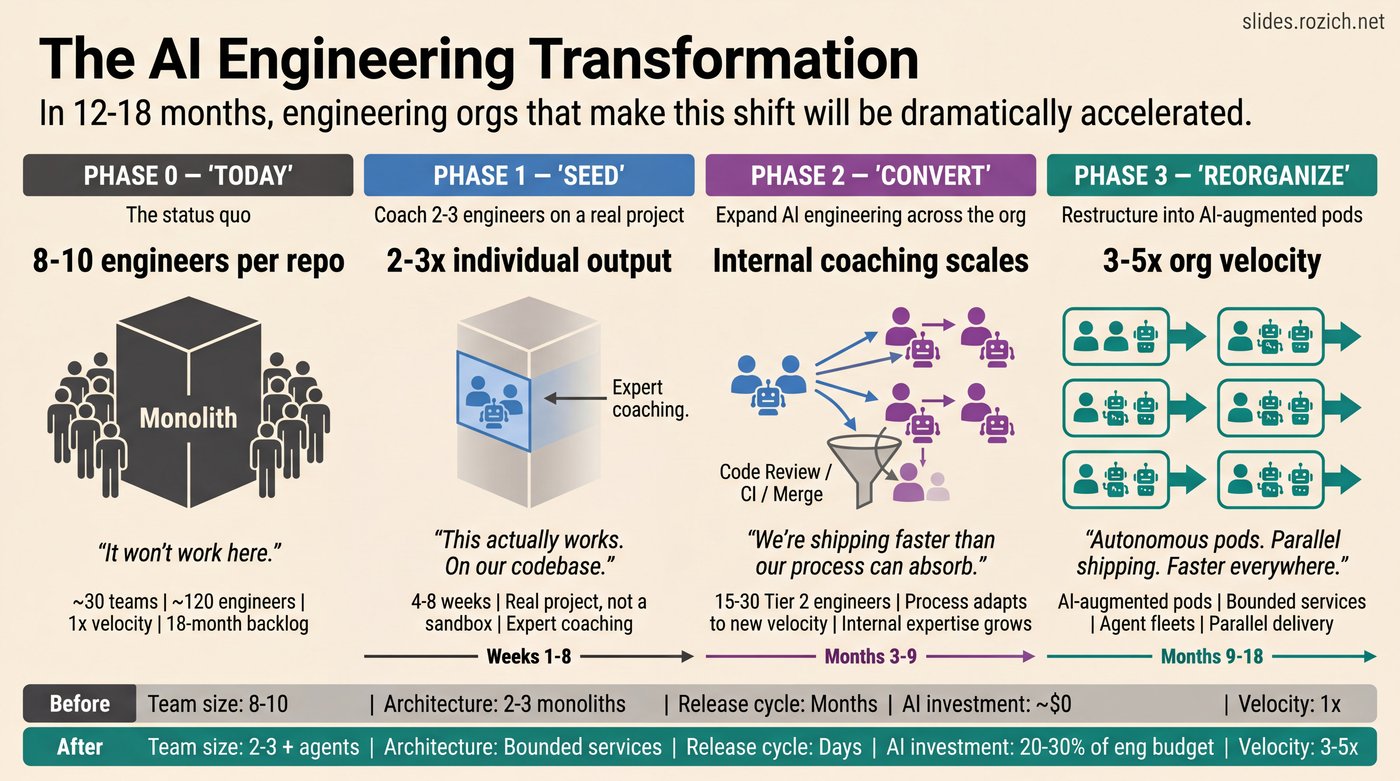

Most companies are trying to jump straight to org-wide transformation — mandates, training programs, enterprise tool rollouts. That doesn't work for the reasons above. But doing nothing isn't an option either. The path is phased, and each phase builds the foundation for the next.

Phase 1: Seed

Find two or three of your most adaptable engineers. Give them a real project — not a sandbox, not a hackathon — and the best available tools. Expect 4-8 weeks of reduced output during the transition. Unless you have someone in-house who's already deep in structured AI workflows, bring in outside expertise to accelerate the learning curve. Trying to figure this out from blog posts and documentation alone is possible but slow — an experienced practitioner compresses months of trial and error into weeks.

The goal isn't just output — though 2-3x individual productivity gains are realistic even in the first engagement.4 It's building firsthand evidence of what the transition actually looks like: what changes, what's hard, what the real gains are on your codebase, with your constraints. The ROI on these first engineers goes well beyond their individual output.
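The transition cost can be estimated with simple arithmetic. The sketch below uses the illustrative org-wide inputs from this briefing's notes (50 engineers, $200K fully loaded, 4-8 weeks of reduced output, a 1.3x net gain). The payback model is deliberately naive and is an assumption of this sketch: it treats the full gain as arriving immediately after the transition and values it at fully loaded cost.

```python
WEEKS_PER_YEAR = 52


def transition_cost(engineers, loaded_cost, weeks, output_drop):
    """Value of output lost while engineers work at reduced capacity."""
    weekly_cost = engineers * loaded_cost / WEEKS_PER_YEAR
    return weekly_cost * weeks * output_drop


def payback_months(cost, engineers, loaded_cost, net_gain):
    """Months of post-transition gains needed to recover the cost.

    Naive assumption: the full net gain (e.g. 1.3x) arrives immediately
    after the transition and is valued at fully loaded cost.
    """
    monthly_gain = engineers * loaded_cost * (net_gain - 1.0) / 12
    return cost / monthly_gain


for weeks in (4, 8):
    cost = transition_cost(50, 200_000, weeks, output_drop=0.5)
    months = payback_months(cost, 50, 200_000, net_gain=1.3)
    print(f"{weeks} weeks at 50% output: ${cost:,.0f}, payback {months:.1f} months")
```

At a 50% output drop this gives roughly $385K for four weeks and $769K for eight, close to the $400K-$800K range in the notes. The naive payback comes out shorter than the 6-9 months quoted there; a slower ramp to the full gain would lengthen it, so the quoted figure is the more conservative one to plan around. The same functions can be rerun with a seed team of two or three engineers.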

Streamline procurement for this seed team. They need access to the top-tier tools now, not after a multi-month approval cycle. Right now, Claude Code is the best tool in the space, but the landscape moves fast. Build a procurement path that can keep up.19

Phase 2: Convert

The engineers who come through Phase 1 become your most valuable asset in the transformation — not because of what they produce, but because of what they know. They've been through the shift. They can articulate what changed in a way that no outside consultant or training program can. They understand your codebase, your team's culture, and the specific friction points that made it hard.

These engineers become internal coaches. They pair with the next cohort. They demonstrate what structured AI engineering looks like on real work — not slides, not a workshop, but the daily practice. This is how adoption actually spreads: engineer to engineer, grounded in credibility earned from doing the work on the same codebase everyone else touches.

As more engineers convert, the bottleneck shifts. It's no longer "can our engineers work this way?" — it's "can our processes keep up?" Code review, CI pipelines, merge workflows, and release cadences all need to adapt to the new velocity. This is a good problem to have. It means the people side is working and the organization is ready for the next step.

Phase 3: Reorganize

With a growing cohort of engineers operating at Tier 2, you have enough signal to restructure. The early evidence points toward AI-augmented pods — small, autonomous teams of two or three engineers with agent fleets, each owning a bounded service and shipping independently. Decomposing monoliths into bounded services enables this parallelism. If your codebase is a sprawling monolith, agents will struggle with it regardless — the practical ceiling is roughly half a million to five million lines in a single context.5 The good news: re-architecting into bounded services is exactly the kind of work AI dramatically accelerates.

The result isn't fewer people doing the same work — it's the same people doing dramatically more. Autonomous pods shipping in parallel, each moving at 3-5x the velocity of the old model. The capacity that opens up is a strategic asset: clear the backlog, launch new products, enter new markets. But just like the factory floor had to be redesigned around electricity — not just the motors swapped out — you can't design the new floor until you've run the first few machines.

Make This a Roadmap Conversation

If you're suddenly 3-5x more productive, how strong is your roadmap and your ability to turn that capacity into real value? The bottleneck shifts from engineering to product and strategy. Could you even absorb 5x the engineering output in a meaningful way? That's a conversation for your product and strategy leads, not just your CFO. The question isn't about headcount — it's "what can we build now that we couldn't before?"

Treat resistance as a strategic risk. Startups of 2-20 people leveraging AI are beginning to rival the output of large engineering orgs. If you let adoption stall, a smaller AI-native competitor will outmaneuver you — not because they have better engineers, but because their engineers are dramatically more productive.20 The path isn't easy — it requires rethinking how you organize work and how you measure performance. But the first step is small: seed the shift with two or three engineers on a real project. Everything else follows from there.

Footnotes

1. Garry Tan, Y Combinator W25 batch, March 2025. A quarter of startups in the current cohort have codebases that are 95%+ AI-generated. https://techcrunch.com/2025/03/06/a-quarter-of-startups-in-ycs-current-cohort-have-codebases-that-are-almost-entirely-ai-generated/

2. METR, "Measuring the Impact of AI on Developer Productivity," July 2025. Randomized controlled trial, 16 experienced developers, 246 real tasks. Developers were 19% slower with AI tools while simultaneously believing they were 20% faster, a 39-point perception gap. A February 2026 update found an increasing share of developers now refuse to work without AI tools, even when paid to do so. https://metr.org/blog/2025-07-10-early-2025-ai-experienced-os-dev-study/

3. Wenzhi Ding et al., "Who is using AI to code? Global diffusion and impact of generative AI," Science, January 2026 (30M+ commits, 160K developers). Average productivity increase of just 3.6%, with gains going almost entirely to experienced developers. https://www.science.org/doi/10.1126/science.adz9311

4. Stanford study on AI's impact on developer productivity, June-July 2025. Raw code output was significantly higher, but net productivity gains were only 15-20% once rework was counted. At even a conservative 1.3x net gain, a 50-person engineering org investing 4-8 weeks of 30-50% reduced output during transition ($400K-$800K at $200K fully loaded per engineer) pays for itself within 6-9 months. See also Dex Horthy: "A lot of the 'extra code' shipped by AI tools ends up just reworking the slop that was shipped last week." https://www.youtube.com/watch?v=tbDDYKRFjhk

5. Steve Yegge, "The 8 levels of AI coding adoption" and "The AI Vampire," 2026. Yegge's eight-level adoption ladder maps the detailed journey between the three tiers described in this briefing: Levels 1-4 correspond to Tier 1 (AI bolted on to existing workflows), Level 5 is the transition to Tier 2 (agent-first development where the workflow fundamentally changes), and Levels 6-8 correspond to Tier 3 (parallel orchestration of agent fleets). The critical transition is Level 4 to Level 5, where the engineer stops coding in the IDE and starts designing in the agent session. Token burn (total tokens consumed) is the key adoption metric: high burn means active experimentation, low burn means surface-level adoption. The gap between major model releases has compressed from ~4 months to ~2 months; every bug and edge case feeds back into training, making the improvement curve recursive. Practical ceiling for effective agent use: ~500K-5M lines of code in a single context; monolithic codebases limit what agents can do. Pragmatic Engineer podcast: https://newsletter.pragmaticengineer.com/p/steve-yegge-on-ai-agents-and-the

6. Dex Horthy, HumanLayer. "Advanced Context Engineering for Coding Agents," August 2025. The key insight: the difference between engineers who struggle with AI and engineers who thrive isn't prompting skill; it's context engineering. Key practices include "frequent intentional compaction" and designing workflows around context management. https://www.humanlayer.dev/blog/advanced-context-engineering

7. Steve Yegge and Gene Kim, Vibe Coding, 2025. "Head chef" framing: the developer moves into the architect role, designing systems and reviewing output while AI handles execution. The shift from writing code to designing systems is the defining transition between Tier 1 and Tier 2.

8. Opsera 2026 AI Coding Impact Benchmark Report (250K+ developers, 60+ enterprises). AI-generated code introduces 15-18% more security vulnerabilities. Senior engineers see nearly 5x the productivity gains of juniors; the experience and judgment that enables sophisticated context engineering is what drives the outsized returns. https://opsera.ai/resources/report/ai-coding-impact-2026-benchmark-report/

9. Anthropic, "Does AI-Assistance on Coding Tasks Affect Mastery?" February 2026. 17% decrease in coding mastery for developers who delegated rather than engaged conceptually. https://www.anthropic.com/research/AI-assistance-coding-skills

10. Amazon GenAI coding incidents, March 2026. Amazon Q followed an outdated internal wiki and corrupted delivery estimates: four Sev1 incidents in a single week, all linked to AI-assisted changes. Their response: a 90-day safety reset, mandatory senior sign-off on all AI-assisted production code, and two-person authorization for Tier-1 systems. But Amazon had major outages long before AI coding; the question is prevalence, not presence. Fortune: https://fortune.com/2026/03/18/ai-coding-risks-amazon-agents-enterprise/

11. Regulated environments require extra care but aren't a reason to wait. Proof-carrying pipelines integrating LLM output with static analysis, symbolic execution, and bounded model checking already exist in early tooling. The EU AI Act doesn't reach full enforcement until August 2026. Even SCIF-level environments are running local inference: the Army has deployed its Enterprise LLM Workspace to SIPRNet and higher classified networks. Computer Fraud and Security, 2025: https://computerfraudsecurity.com/index.php/journal/article/view/793

12. Amdahl's Law applied to software delivery: if code generation was 30% of time-to-ship and you make it 10x faster, time-to-ship drops by only about 27% (a 1.37x overall speedup), not 10x. The electrification analogy is instructive: early factories replaced steam engines with electric motors but kept the same belt-and-shaft layout. Productivity gains came only after firms adopted entirely new factory designs. See Paul David, "The Dynamo and the Computer" (1990). Robert Solow's productivity paradox ("You can see the computer age everywhere but in the productivity statistics") was resolved only when companies redesigned processes around the technology, not just adopted it. See Brynjolfsson and Hitt, "Beyond Computation" (2000).

13. Faros AI Productivity Paradox Report (10K+ developers, 1,255 teams). PRs merged per developer up 98%, but average PR size up 154%, review time up 91%, and bugs per developer up 9%. AI-heavy teams deploy 3x more frequently, but 69% report increased deployment problems and 47% report increased manual QA (Harness, "2026 State of AI-Powered Development," March 2026). AI amplifies the strengths of high-performing organizations and the dysfunction of struggling ones (Google Cloud DORA Report, ~5,000 developers, July 2025).

14. Anthropic Code Review, launched March 9, 2026. Multi-agent PR review system: specialized agents per issue class, adversarial verification step, <1% incorrect findings. GitHub Copilot has handled 60 million code reviews. The same AI agents that write code can now handle review, test generation, requirements analysis, and deployment automation. TechCrunch: https://techcrunch.com/2026/03/09/anthropic-launches-code-review-tool-to-check-flood-of-ai-generated-code/

15. Anthropic, "How AI is transforming work at Anthropic," December 2025. Requirements management, QA, CI/CD configuration, incident response: every phase of the SDLC that used to require a specialist is becoming something an agent can handle with the right context and oversight. https://www.anthropic.com/research/how-ai-is-transforming-work-at-anthropic

16. Jensen Huang, NVIDIA GTC keynote, March 2026. "If that person did not consume at least $250,000 worth of tokens, I am going to be deeply alarmed." Huang suggested companies may soon assign token budgets to engineers just like salaries or project resources, and estimated that engineer token allocations could reach half of base salary in value.

17. Tomasz Tunguz, "Will I Be Paid in Tokens?" (tomtunguz.com, March 2026). Frames AI inference as the fourth component of engineering compensation: salary, bonus, options, and inference costs. Pegs the 75th percentile engineer salary at $375K and estimates $100K in inference brings fully loaded cost to $475K, 21% of it in tokens. The key CFO question becomes productive work per dollar of inference. https://tomtunguz.com/inference-as-compensation/. See also Kevin Roose, "Tokenmaxxing" (New York Times, March 21, 2026): an OpenAI engineer processed 210 billion tokens in a single week; a single Claude Code user racked up over $150,000 in monthly costs.

18. On the limits of token burn as a metric: PYMNTS.com, "AI Adoption Is Being Measured in Tokens, but the Metric Falls Short" (March 2026) draws comparisons to earlier enterprise metrics that proved easier to game than interpret, invoking Goodhart's Law. Glen Rhodes argues that measuring AI adoption by token burn is measuring output, not outcomes: "a senior engineer who burns $250K in tokens and ships garbage architecture is not a win, while one who burns $40K and cuts release cycles in half is a hero." https://www.pymnts.com/artificial-intelligence-2/2026/ai-adoption-is-being-measured-in-tokens-but-the-metric-falls-short-experts-say/; https://glenrhodes.com/hot-take-on-the-500k-engineer-should-burn-250k-in-tokens-quote-circulating-on-twitter/

19. Nvidia deployed Cursor across 30,000+ engineers and achieved 3x committed code volume with flat bug rates (https://cursor.com/blog/nvidia). Tenex cut engineering 90% and hit 10x output (Latent Space podcast, November 2025). Mercor went from $1M to $500M ARR in 17 months with a tiny team (Lenny's Podcast, September 2025). But you don't start by trying to be Nvidia; you start with two or three engineers, a real problem, and the right tools.

20. Shopify CEO Tobi Lutke's AI memo (April 2025) made AI adoption a performance expectation: teams must prove AI can't handle a task before requesting headcount. An increasing number of companies are following this approach. https://www.cnbc.com/2025/04/07/shopify-ceo-prove-ai-cant-do-jobs-before-asking-for-more-headcount.html